When I started my AI Master’s degree in 2023, I said to the director of the program, “If no more advances happen, it will still take years for what has happened to diffuse through society.”

Suffice it to say, more advances have happened since.

The paradox is that as AI tools have become more capable, there is more work to be done to figure out how to apply them. People need to work out how to use these tools as leverage while still doing their jobs, and the target keeps moving.

I believe this requires a new kind of role at organisations.

I’m not sure exactly what will shake out as the industry standard for what these roles should be called or what, specifically, their job descriptions might look like. They could take the form of a Chief Acceleration Officer or Engineer, a Head of AI Enablement, or AI Enablement Engineers. All of these roles, and more, have been advertised by companies at one time or another.

Since these are not necessarily novel ideas from me, what value am I adding here?

The point of this article is to give a sense of how important roles like these will be in the near future and how they could work within organisations at scale. The structures and roles that organisations should create to absorb AI-driven change are still emerging. Organisations will have to decide for themselves which roles to take on, but the goal of those roles would be the same: enabling AI adoption to achieve org-wide acceleration.

The Current State of Adopting AI

Zuckerberg is building an AI agent. FedEx has enlisted Accenture to help upskill its employees in AI. Intercom has a directive to double productivity. Moves like these all hint at a hunger among company leaders, across industries, to adopt AI and to see their teams adopt it.

“Adopting AI” is an ambiguous, ill-defined term. It doesn’t mean very much on its own. Maybe it means everyone in a company will self-serve and build their own AI agents. Perhaps it only means a few employees will go to some training. Maybe leadership just sets a target and hopes people get on board.

Leaders typically want org-wide outcomes, such as increasing profitability, driving revenue, scaling internationally, or (in the case of governments or non-profits) helping as many people achieve their goals as possible. The intuition is that “AI will make everyone more productive,” and that’s probably true. But when it comes to org-wide outcomes, individual super-productivity can create problems as quickly as it solves them.

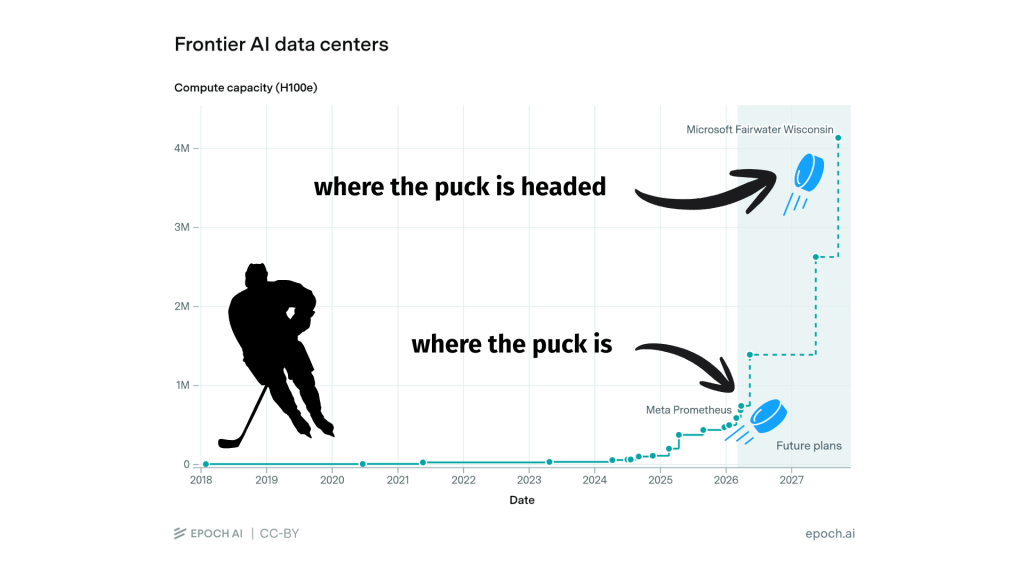

What leaders probably want, instead, is more work getting done across the organisation, not simply each person being more productive. With AI, the puck is headed in a direction where individual productivity is only part of the story of what “adopting AI” looks like, and it may not be the most important part.

What Does It Mean to Adopt AI?

“Adopting AI” is a Rorschach test. To some it means enabling your teams to use AI to do their current jobs more efficiently, to others it means incorporating AI into the organisation’s products or services, and for still others it means overhauling business structures and incorporating agentic AI to fundamentally rethink how business works.

What this reveals about the person is whether they are primarily people, product, or process oriented. I mean to address the adoption of all three in this article. In my view, improving all three can happen simultaneously.

Progress So Far Seems Slow

Anyone who has used AI can see first-hand how powerful it can be. It does a bang-up job of search, generating text, translating, synthesising information, summarising, and pretty much any text-to-text work that needs doing. It is also great at code review and generation, largely because it does pattern matching at an unbelievable level.

This does translate into productivity. However, the evidence suggests measured productivity gains are smaller than you might expect. In recent studies, access to AI tools has produced average productivity uplifts ranging from about 1.4% for general office work to 26% (±10%) for software engineering, with plenty of evidence for values between those two numbers.

This may not square with people’s personal experience, which may suggest that automation and tool use could increase productivity several-fold if these tools were leveraged well.

I agree, and there’s evidence to suggest that it’s not simply perception. I believe there are structural limitations that need to be overcome, and that what ‘productivity’ means needs to be adjusted.

The Capability Overhang

Peer-reviewed studies take time to gestate, so while the studies above about worker productivity were published recently, they only represent the state of play as of a year ago or so. AI capabilities have dramatically changed since then.

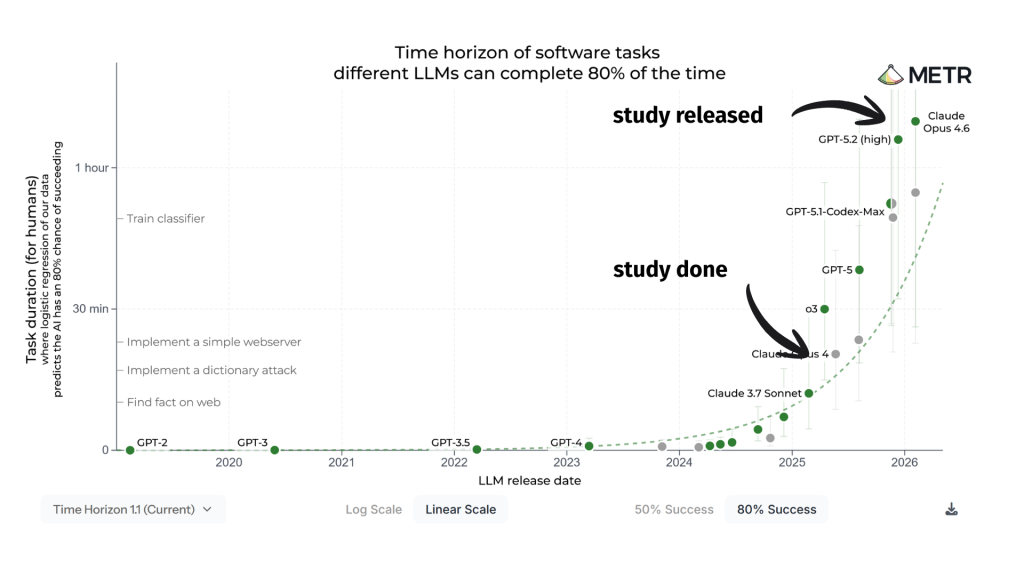

One of the most telling illustrations of this is the “Time Horizon” graph from METR, which shows that AI can now complete tasks that normally take humans about an hour, with an 80% success rate. A year ago, it was 10-minute tasks. I think it is fair to read that as a 6x uplift in capability within a year.
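To make the 6x figure concrete, it can be converted into an implied doubling time. This is a back-of-the-envelope sketch that assumes smooth exponential growth between just two data points (10 minutes a year ago, about 60 minutes now). METR fits its trend across many models, so treat the output as illustrative, not as METR’s own estimate.

```python
import math


def implied_doubling_time(start_minutes: float, end_minutes: float, elapsed_months: float) -> float:
    """Months per doubling, assuming smooth exponential growth between two points."""
    growth = end_minutes / start_minutes  # 60 / 10 = 6x
    doublings = math.log2(growth)         # log2(6) is about 2.58 doublings
    return elapsed_months / doublings


def project_horizon(current_minutes: float, doubling_months: float, months_ahead: float) -> float:
    """Naive extrapolation of the 80%-success time horizon if the trend simply continued."""
    return current_minutes * 2 ** (months_ahead / doubling_months)


doubling = implied_doubling_time(10, 60, 12)
print(f"Implied doubling time: {doubling:.1f} months")  # prints 4.6
print(f"Naive projection 12 months out: {project_horizon(60, doubling, 12):.0f} minutes")  # prints 360
```

The projection is straight-line extrapolation, not a forecast; trends like this tend to bend.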

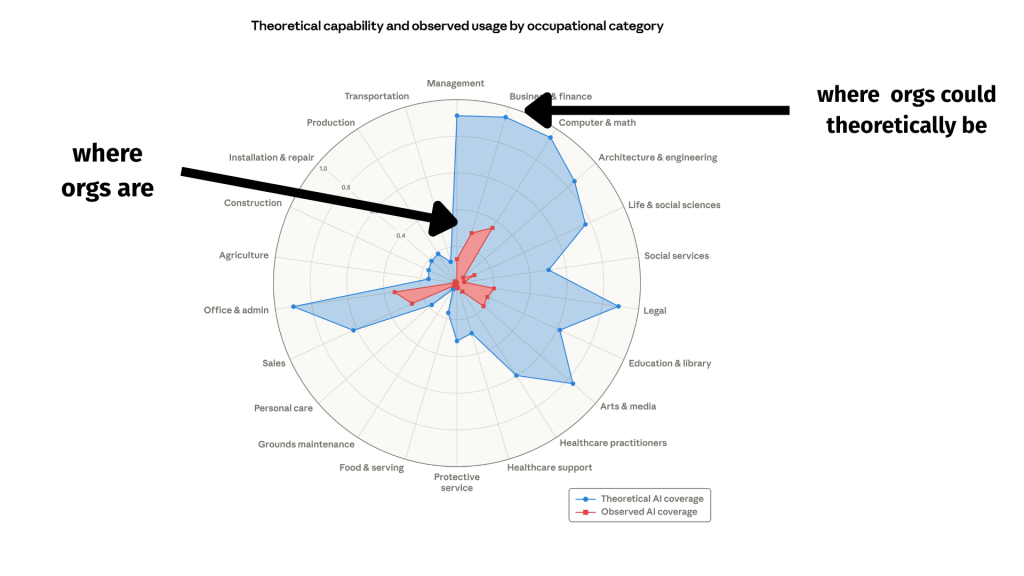

While models are improving at an exponential rate by this measure, organisations largely have not been able to take advantage of the potential. The resulting gap is what is called the “capability overhang.”

This raises the natural question: why is there a significant and growing distance between theoretical capability and applied coverage, and how can it be closed?

Why Adoption is Slow

The reasons organisations have not yet been able to absorb the growing capabilities of AI wind their way through the structures of businesses. Effective AI adoption requires effective continuous change management: not moving from one system to another, as when onboarding a new tool, but experimenting with no clear end state in mind. For organisations that love well-scoped projects, this can be mind-bending and process-breaking.

Until AI capabilities stabilise, which seems unlikely to happen in the near future, this will be an ongoing process of trial and error.

Let me share my perspective on what gaps exist, provide some supporting evidence, and in breakout boxes give an idea of what a hypothetical AI Enablement Engineer role might do to help close these gaps.

The Process Gap

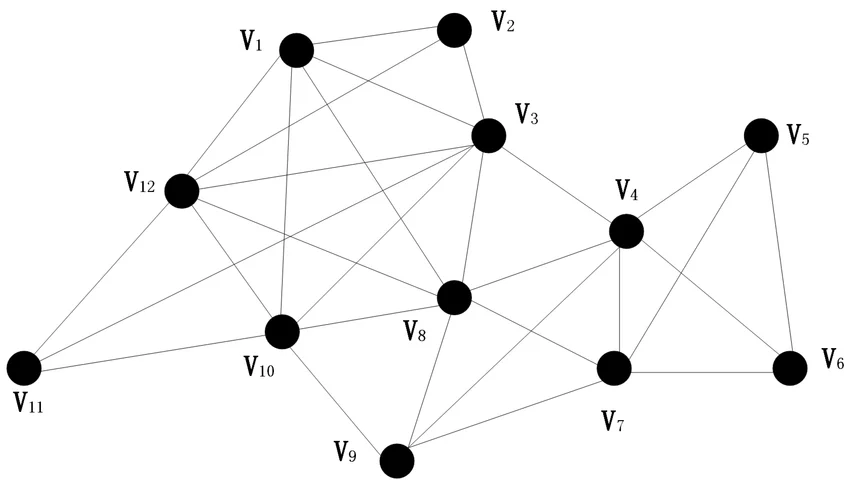

Systems are made of nodes and edges: flowcharts and networks through which inputs enter and outputs leave. A business is an economic engine, a particular type of system, that ideally creates more power (better products and services) efficiently (profitably). AI can help with both if put to good use, but the processes within a business need to complement and support each other. With rapid acceleration, pressure can land on every part of the business at once, and it becomes imperative to know whether real work is being done.

Structural Limits

While organisations are typically mapped as a top-down hierarchical org chart, communication within companies happens in networks. This can be represented as relationships between any combination of individuals and teams, and their interactions with others.

If you give AI tools to everyone in the organisation, you’d expect more work to get done.

It seems to, but it also results in more ‘work slop’: output from one node that gets passed to other nodes in the network to deal with. As an example, one study found that for experienced software engineers, productivity on their own tasks can decrease as they need to review more of others’ code.

If one team’s output is another team’s input, each node can appear more productive while the system as a whole accomplishes little more. This is the land of very promising demos and many more reports, but no great acceleration in sales or product development.

An AI Enablement Engineer would at times embed within teams to collaborate and advise on what work to automate with AI, and how. A Chief Acceleration Officer (CAO) may consider how teams affect other teams and think about how to increase the throughput of the whole system. They champion overall process improvement, not just the improvement of one node.
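A toy model shows why node-level productivity can fail to move the system. The sketch below assumes a simple serial chain of three teams (say, writing code, reviewing it, and shipping it) with fixed weekly capacities. The numbers are hypothetical, and real organisations are networks rather than chains, but the bottleneck effect is the same.

```python
def simulate(capacities: list[int], weeks: int) -> tuple[int, list[int]]:
    """Simulate a serial chain of teams with fixed weekly capacities.

    The first team produces at full capacity each week; every later team can
    process at most its capacity from the queue in front of it.
    Returns (items that left the final team, backlog waiting before each later team).
    """
    backlogs = [0] * (len(capacities) - 1)
    delivered = 0
    for _ in range(weeks):
        flow = capacities[0]  # output of the first team this week
        for i, capacity in enumerate(capacities[1:]):
            backlogs[i] += flow  # one team's output is the next team's input
            flow = min(backlogs[i], capacity)
            backlogs[i] -= flow
        delivered += flow
    return delivered, backlogs


print(simulate([10, 10, 10], weeks=4))  # (40, [0, 0])
print(simulate([30, 10, 10], weeks=4))  # (40, [80, 0]): first team 3x faster, same delivery
print(simulate([30, 30, 30], weeks=4))  # (120, [0, 0]): whole system accelerated
```

Tripling the first team’s output delivers nothing extra in this model; it just piles 80 items of review work onto the next team. That is the argument for looking at the throughput of the whole system rather than one node.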

Governance

Access to information is critical for AI; context is king. In most organisations, though, information access is carefully controlled. Whether because of legal, ethical, or contractual bounds, information must not cross-pollinate in certain ways. This is an extremely challenging problem under normal circumstances, and with the advent of AI it becomes even more so.

An AI Enablement Engineer would build, or buy and configure, tools to ensure that the patterns and processes by which AI accesses data are meaningfully controlled. They could experiment with, evaluate, and advise on the adoption of tools like Merge, Composio, or Jentic for giving AI controlled access to APIs, and build out the capability to self-serve remote desktop environments with project- or customer-specific data access.
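Merge, Composio, and Jentic each have their own access models, and the sketch below uses none of them. It is a hypothetical illustration of the kind of rule an AI Enablement Engineer might enforce before any document reaches an agent’s context: a classification ceiling plus a hard boundary between customers. The classification levels, field names, and documents are all assumptions.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical classification ladder; real organisations will have their own.
LEVELS = {"public": 0, "internal": 1, "restricted": 2}


@dataclass(frozen=True)
class Document:
    doc_id: str
    customer: Optional[str]  # None means internal material not tied to a customer
    classification: str


@dataclass(frozen=True)
class AgentContext:
    project: str
    customer: Optional[str]  # the customer this piece of work is for, if any
    max_classification: str


def can_read(ctx: AgentContext, doc: Document) -> bool:
    """Allow a document into an agent's context only if both rules pass."""
    # Rule 1: never exceed the classification ceiling set for this work.
    if LEVELS[doc.classification] > LEVELS[ctx.max_classification]:
        return False
    # Rule 2: no cross-pollination of one customer's data into another's work.
    if doc.customer is not None and doc.customer != ctx.customer:
        return False
    return True


def filter_context(ctx: AgentContext, docs: list[Document]) -> list[Document]:
    return [doc for doc in docs if can_read(ctx, doc)]


ctx = AgentContext(project="acme-renewal", customer="acme", max_classification="internal")
docs = [
    Document("acme-contract-summary", "acme", "internal"),
    Document("globex-pricing", "globex", "internal"),
    Document("product-faq", None, "public"),
    Document("acme-legal-dispute", "acme", "restricted"),
]
print([d.doc_id for d in filter_context(ctx, docs)])  # ['acme-contract-summary', 'product-faq']
```

The design choice worth keeping is that filtering happens before anything reaches the model, rather than relying on instructions in the prompt to stop an agent from using what it can already see.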

Security

LLM-based systems face new security problems, such as prompt injection and supply-chain attacks. OWASP maintains a Top 10 list of security risks for LLM applications, along with approaches that can help mitigate them. Currently at the top are prompt injection, sensitive information disclosure, and supply-chain vulnerabilities.

An AI Enablement Engineer would regularly review the security risks and work with platform teams to mitigate them. They would help build sandboxed environments with controlled access to the internet and supply chain vulnerability scanning for responsible agentic AI use, accessible to even the least technical employees.
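In a sandbox, ‘controlled access to the internet’ usually means an egress allowlist enforced at the network layer (a proxy or firewall), not in application code. The Python check below is only a sketch of the policy such a proxy might apply. The allowed hosts are hypothetical examples, chosen to show that a package index can be reachable while everything else is not.

```python
from urllib.parse import urlsplit

# Hypothetical allowlist for a sandboxed agent environment.
ALLOWED_HOSTS = {"pypi.org", "files.pythonhosted.org", "api.github.com"}
ALLOWED_SUFFIXES = (".internal.example.com",)


def egress_allowed(url: str) -> bool:
    """Return True only for HTTPS requests to explicitly allowed hosts."""
    parts = urlsplit(url)
    if parts.scheme != "https":
        return False
    # .hostname drops any user:password@ prefix and port, and lowercases the host.
    host = parts.hostname or ""
    return host in ALLOWED_HOSTS or host.endswith(ALLOWED_SUFFIXES)


for url in [
    "https://pypi.org/simple/requests/",
    "http://pypi.org/simple/requests/",  # plain HTTP is refused
    "https://pypi.org.attacker.net/",    # lookalike domain is refused
    "https://pypi.org@attacker.net/",    # userinfo trick is refused
    "https://wiki.internal.example.com/page",
]:
    print(url, egress_allowed(url))
```

Matching on the parsed hostname, rather than on the raw URL string, is what defeats the lookalike and userinfo tricks in the examples.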

The People Gap

People are the lifeblood of organisations; they hold the domain knowledge, creativity, empathy, critical thinking, and understanding that no LLM can replicate. Whether your organisation can keep pace with AI progress hinges on people being able to get up to speed with why they may benefit from using AI and how to use it appropriately in their roles. Time and space need to be made for learning and experimentation. The barrier to entry needs to be lowered while, at the same time, everyone needs to upskill.

Attitudes

AI is not just a useful tool; for some, it carries a lot of baggage. The political and technocratic environment in which foundation models are being created and iterated on, tech CEOs promising that AI will ‘replace’ people, and concerns about energy prices and environmental costs are all creating a cloud of anxiety around the technology itself.

Most people (in the USA anyway) are more worried than hopeful about future AI use in the workplace.

For some, adopting AI is a question of identity. If your role has relied on doing certain tasks, such as writing code, and there’s a high likelihood that people doing those tasks will be valued less in the future, you may see AI as a threat to your identity. This is especially true of narrow roles that have not changed much in the last few decades.

Approaching rapid change requires some humanity and some humility. People forget that the mechanics of work, the sending of the email or the writing of the code, are not the work itself. The work is solving a problem. The mechanics are the means by which we solve it.

Until we run out of problems to solve, which at this stage seems unlikely, we have to have people. Unless you are an AI agent reading this, I hope you agree. The difficulty lies in change management. It is in finding ways to show every employee that while the mechanics of the work might change, the work is still there.

I have seen first-hand how this friction dissolves. As a consultant many moons ago, I would walk into rooms with suspicious, if not antagonistic, employees who were not interested in what I had to say. The antidote was straightforward but took force of will, because it is the opposite of what many think consulting is: project authority, show knowledge, present convincing arguments.

Instead, the solution is simply to be humble, realise you don’t know what you don’t know, and work side-by-side with people to help them solve their problems. The best employees can also be some of the most resistant to change. Once you have helped them solve their problems and they see the value, they start dipping more eagerly into the more typical toolbox of formal training, demos, and access to tools.

It’s important that support is intentional and directed, but compassionate and empathetic.

AI Enablement Engineers must be able to work side-by-side with people to understand their goals and what makes their work frustrating. Immediately after learning, listening, and understanding, AI Enablement Engineers would introduce and support AI tools that help solve employees’ problems. This is a human-centric, technology-supported role.

Knowledge

Having access to AI tools such as ChatGPT or Claude Cowork is not enough. Having the knowledge to make use of them is key. This can be done via side-by-side work, hands-on workshops, and curated links to resources. Most companies don’t need custom educational materials for off-the-shelf commercial tools.

For internal tools, knowledge of how to use them and discoverability is key. I’ll say more when we get to “The Product Gap.”

AI Enablement Engineers would maintain curated help docs and FAQs, and offer hands-on support via office hours and workshops. Enablement means meeting people where they are and giving them a hand getting where they want to go. For internal tooling, access and an exceptional user experience are key to enablement.

Mastery

Mastery implies outsized competency, and it implies deliberate practice. I’m aware most people are not interested in gaining mastery “in AI.” I think this is similar to not necessarily being interested in gaining mastery in “computers” or “cell phones,” yet everyone uses them. People typically learn as much as they need to do their jobs, hit a plateau, and decide if they want to keep learning. I think the role of an AI Enablement Engineer would be to help guide and support learning as far as people want to go, and show them what is beyond their current plateau. AI Enablement does not presuppose that gaining mastery in AI trumps all else that a person is doing in an organisation, but it should be clear what gaining mastery can give them.

AI Enablement Engineers would continually show what the “art of the possible” looks like, pushing the envelope and experimenting, to ensure that people who want to keep gaining mastery in AI have an idea of what they can do with it. They also can make sure people have the support, tools, and access to enable experimentation on their part as well, as novel ideas for use of AI will come from all areas of an organisation.

The Product Gap

Internal tool development (the platform) and external tool development (the product or service) can both be radically altered when AI is introduced. By dogfooding AI internally, using the latest tools and processes, product development may occur naturally as a byproduct. By experimenting, the knowledge of what to buy vs. build may become clear.

External Product

While AI is often seen, usually with derision, as a ‘tacked-on feature’ of modern SaaS software, it is an open question what the future of AI within traditional software looks like. As with anything in products, the decision about what to include must be shaped and prioritised using information from users, but not dictated by them.

In this arena, AI Enablement Engineers can be a resource for showing what is possible with AI, and may inform how product teams develop features. AI Enablement roles would be in a good position to tell product teams what is coming and what to pay attention to.

Internal Platform

The platform is the set of tools used by internal employees, whether dashboards or self-service dev environments. Specialised tools, such as agentic AI or autoresearch primitives, could be developed by AI Enablement Engineers. These could help other teams deploy agents that interact autonomously with one another, safely and with little technical know-how.

I have found that what separates what is possible from what is actually used is often the barrier to entry: how easy is it to get started? The user experience of internal tooling can make a huge difference to adoption.

What Success Looks Like

With every new step change in AI capability comes new possibility in businesses. At first it was being able to summarise docs, and now truly useful autonomous agents are right there. Models and tools have exploded in capability. Next, autoresearch, agentic swarms, and self-improvement loops are on the horizon.

Success for these AI Enablement roles looks like everyone in the business getting access to tools that can take advantage of these new capabilities, understanding what they are capable of, and being able to apply them with confidence. This implies they can do so safely, with knowledge and understanding of how they work, and with the ability to audit and evaluate performance.

The human element must be at the forefront. It is critical that people are learning, have the time and space to think deeply about their work, and are able to collaborate with others on the most challenging parts of the work without burning out. In my experience, the problems of ‘brain fry’ and the ‘comprehension gap’ are real. Supporting not only AI for more short-term productive output, but also AI in support of deeper comprehension and personal growth, is a key aspect of the role.

Ultimately, success is fulfilled people solving difficult and important problems with each other. I hope that never changes. Because AI is scarily capable, I think people get worried. But ultimately, until we run out of problems to solve, we still have work to do.

And I want to be very careful about what I mean by work. For example, the work of an airplane manufacturer is not designing a tail fin; it is getting as many people to their desired destinations as quickly, safely, and sustainably as possible. With such a lofty goal, there is no end to the work, no matter how much AI is deployed. I find that reassuring.

The Strategy

The strategy of a Chief Acceleration Officer may look different depending on the organisation they operate in, but they do have to keep their priorities straight. This is change management, in flight, at scale, without an established playbook. Rather than a rigid process, it requires adaptability.

Rather than adopting a strategy right away, I would suggest starting with tactical applications and growing the muscles of implementation needed to form a higher-level strategy. If just starting out, before hiring AI Enablement Engineers under a Chief Acceleration Officer, I would suggest the CAO work with several teams to tactically deliver value while gathering information to inform strategy.

As an example, here’s a sample agenda for gathering information while also providing solutions, run over six weeks. This hands-on approach would be a trial run for a Chief Acceleration Officer and should be delivered by them. Once the approach is ironed out, it could be handed over to trained AI Enablement Engineers to carry out in other teams.

Week 1: Gather Issues from One Team

Working with one team, ideally a ‘friendly’ one eager to adopt AI, make sure everyone has access to the primary tools needed to adopt AI. Access, of course, is the first step. This could be Claude Code, Cowork, Gemini, ChatGPT, GitHub Copilot, or Windows Copilot, whatever aligns with the org’s existing tooling choices. The specific tool at this stage is not necessarily the important part.

Then, work side-by-side with a leader and an employee in the team for a week. Write down what processes they follow, where the pain points are, and what problems they are facing. Note, especially, iterative work and where bottlenecks occur. Show them tactical improvements that can be made, such as better prompting or MCP tool access. Make sure you provide value while working alongside them, rather than only observing. Sitting in on meetings and pairing on tasks that could involve AI are valuable exercises.

Week 2: Co-Create a Strategy

Review with the team leader the processes you think are ripe for automation or semi-automation with AI. Also identify non-AI automation or process improvements that could support AI, such as removing unnecessary steps from their process or creating deterministic scheduled jobs. AI can help build deterministic automation, and this should not be ignored as a potential process improvement. Co-create a Skill to help solve one of the problems and make it available to the whole team. Sit with a team member as they use the Skill, and note the issues with it. Show them how they can iterate on and evaluate Skills, and support them in doing it. Help the team prioritise future Skills.
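Evaluating a Skill does not need heavy tooling to start with: a handful of realistic inputs, each paired with a check on the output, is enough to see whether an edit made things better or worse. In the sketch below, `draft_skill` is a hypothetical deterministic stand-in so the example runs; in practice it would invoke the actual Skill through whichever AI tool the team uses, and the cases would come from the team’s real work.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Case:
    name: str
    input: str
    check: Callable[[str], bool]  # True if the output is acceptable


def evaluate(skill: Callable[[str], str], cases: list[Case]) -> tuple[float, list[str]]:
    """Run every case through the skill; return (pass rate, names of failed cases)."""
    failures = []
    for case in cases:
        try:
            ok = case.check(skill(case.input))
        except Exception:
            ok = False  # a crash counts as a failure rather than aborting the run
        if not ok:
            failures.append(case.name)
    pass_rate = (len(cases) - len(failures)) / len(cases) if cases else 0.0
    return pass_rate, failures


def draft_skill(ticket: str) -> str:
    # Hypothetical stand-in: "summarise" a support ticket as its first sentence.
    return ticket.split(".")[0].strip() + "."


cases = [
    Case("keeps the headline", "Printer offline. Tried restarting.", lambda out: out == "Printer offline."),
    Case("stays short", "VPN drops hourly. Started Monday. Whole team affected.", lambda out: len(out) < 40),
    Case("keeps refund requests", "Customer wants a refund. Order arrived damaged.", lambda out: "refund" in out.lower()),
    Case("flags outages as urgent", "Production is down. All customers affected.", lambda out: "urgent" in out.lower()),
]

rate, failed = evaluate(draft_skill, cases)
print(f"pass rate {rate:.0%}, failed: {failed}")  # pass rate 75%, failed: ['flags outages as urgent']
```

The failing case is the useful output: it tells the team what to change in the Skill next, and rerunning the same cases after each change shows whether the fix broke anything else.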

Endeavour to meet with everyone on the team and help them with some aspect of their jobs. At the end of the week, meet with the team leader to discuss how to instrument and measure progress.

Week 3: Instrumentation

When a measure becomes a target, it ceases to be a good measure. – Marilyn Strathern, paraphrasing Goodhart’s Law

If you truly believe, as I do, that AI can accelerate organisations and individuals several-fold, instrumentation is critical. I am choosing this word very carefully. Instrumentation is what you need in high-acceleration vehicles like rockets and race cars, because as velocity increases, you need to know that everything is running healthily. How are the oil pressure, engine temperature, and RPM?

The goal of a drag racer is not to achieve optimal oil pressure. It is to accelerate as quickly as possible in the correct direction. But without the oil pressure displayed clearly on the dashboard, things can go wrong very quickly.

I believe the same is true of teams, given what we’re about to do. If AI can help a sales team gather more qualified leads, that’s fantastic. But if that doesn’t translate into more revenue and happy customers, there’s a problem. Instrumenting the sales funnel and ensuring that AI doesn’t just fill the calendar with sales calls that lead nowhere is critical. If instrumentation is not in place for a team, visible to every team member, things can get hairy quickly when AI is introduced.

For software engineering teams, instrumentation might include pull requests opened, time from support ticket creation to resolution, deployments to production, and bugs introduced per contributor. A dashboard showing trends is critical to understanding how we’re accelerating: are we introducing new features at a greater rate, but at the expense of quality?

This is especially important as perceptions change over time. Having some verifiable, hard-to-fudge data can show whether we’re really producing more bugs, or just getting through more features faster and introducing bugs at the same ratio. Customers’ and stakeholders’ qualitative responses can also be part of the dashboard through sentiment analysis, if you have access to such information. But keep in mind that the hedonic treadmill may play a part here. If people get used to features being delivered at 2x or 3x the rate of a year earlier, they may be disappointed if that rate fluctuates, even though you’ve very clearly shown AI has helped deliver more value more quickly.
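The difference between ‘more bugs’ and ‘the same bug ratio at higher throughput’ comes down to the denominator. The sketch below computes bugs both per contributor and per merged pull request, so a dashboard can show both side by side. The field names and sample numbers are hypothetical; in practice they would be pulled from your Git host and ticketing system.

```python
from dataclasses import dataclass
from statistics import median
from typing import Optional


@dataclass
class Period:
    label: str
    prs_merged: int
    bugs_introduced: int
    contributors: int
    resolution_hours: list[float]  # time to resolve each support ticket closed in the period


def summarise(p: Period) -> dict:
    resolution: Optional[float] = median(p.resolution_hours) if p.resolution_hours else None
    return {
        "period": p.label,
        "prs_per_contributor": p.prs_merged / p.contributors,
        "bugs_per_contributor": p.bugs_introduced / p.contributors,
        "bugs_per_pr": p.bugs_introduced / p.prs_merged if p.prs_merged else 0.0,
        "median_resolution_hours": resolution,
    }


before = Period("last year", prs_merged=40, bugs_introduced=4, contributors=5, resolution_hours=[10, 30, 20])
after = Period("this year", prs_merged=100, bugs_introduced=10, contributors=5, resolution_hours=[8, 12, 16, 40])

for row in (summarise(before), summarise(after)):
    print(row)
# bugs_per_contributor rises from 0.8 to 2.0, which looks alarming on its own,
# but bugs_per_pr stays at 0.1: more output at the same quality ratio.
```

Neither number is the target; the point is that showing both keeps a team from reading a throughput gain as a quality collapse, or the reverse.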

Week 3 is about establishing instrumentation with no particular opinion about goals, and building out a dashboard to keep track of it. Making those stats available to AI via CLI/APIs is also critical to build in a positive feedback loop.
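Exposing the same numbers through a small command-line tool means an AI agent can query them the same way a person reads the dashboard. A minimal sketch using Python’s standard `argparse` and `json` modules; the `team-metrics` name, the `--period` flag, and the in-memory data are hypothetical placeholders for whatever actually backs your dashboard.

```python
import argparse
import json
import sys

# Hypothetical data; a real tool would query the dashboard's backing store.
METRICS = {
    "2026-W09": {"prs_merged": 40, "bugs_introduced": 4, "contributors": 5},
    "2026-W10": {"prs_merged": 100, "bugs_introduced": 10, "contributors": 5},
}


def main(argv=None) -> int:
    parser = argparse.ArgumentParser(prog="team-metrics", description="Print team metrics as JSON.")
    parser.add_argument("--period", help="limit output to one period, e.g. 2026-W10")
    args = parser.parse_args(argv)

    if args.period is None:
        data = METRICS
    elif args.period in METRICS:
        data = {args.period: METRICS[args.period]}
    else:
        # Machine-readable error on stderr plus a non-zero exit code, so agents can detect failure.
        print(json.dumps({"error": f"unknown period: {args.period}"}), file=sys.stderr)
        return 1

    print(json.dumps(data, indent=2))
    return 0
```

To run it from a shell, register `main` as a console-script entry point (or call `sys.exit(main())` under an `if __name__ == "__main__":` guard); an agent with shell access could then run `team-metrics --period 2026-W10` and parse the JSON it prints.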

Week 4: Access & Governance

To make sure that employees get the most from their tools, they should be given access to systems such as Microsoft Teams or Slack, email, CRMs, and so on. However, each of these brings its own challenges. Begin opening up access, starting with the safest places first. Identify how you’ll grant agentic AI different levels of access from employees, which is critical for building out autonomous systems. Make sure that people within the team are able to give their AI tools access to their most critical sources of context, and start sketching out how you’ll build similar access for agents, scoped to more granular roles. The goal for the end of the week is that every employee on the team can connect their interactive AI tools to their most-used systems.
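One way to give agents access ‘scoped to more granular roles’ is delegated least privilege: an agent acting for an employee receives only the scopes it explicitly requests, and only if that employee already holds them. The sketch below is hypothetical (the `system:action` scope format and names are assumptions); in practice this would map onto the OAuth scopes or IAM roles of the systems being connected.

```python
def grant_agent_scopes(owner_scopes: set[str], requested: set[str]) -> frozenset[str]:
    """Give an agent an explicit subset of its owner's scopes, never more."""
    excess = requested - owner_scopes
    if excess:
        raise PermissionError(f"owner cannot delegate scopes they do not hold: {sorted(excess)}")
    return frozenset(requested)


def authorise(agent_scopes: frozenset[str], system: str, action: str) -> bool:
    """Check a single action, using hypothetical 'system:action' scope strings."""
    return f"{system}:{action}" in agent_scopes


employee = {"email:read", "email:send", "crm:read", "crm:write", "slack:read", "slack:post"}

# A lead-follow-up agent needs to read the CRM and post to Slack, nothing else.
agent = grant_agent_scopes(employee, {"crm:read", "slack:post"})
print(authorise(agent, "crm", "read"))    # True
print(authorise(agent, "crm", "write"))   # False: the employee can, the agent cannot
print(authorise(agent, "email", "send"))  # False

try:
    grant_agent_scopes(employee, {"finance:read"})
except PermissionError as err:
    print(err)  # owner cannot delegate scopes they do not hold: ['finance:read']
```

Making the agent’s scopes an explicit, immutable set also gives you something concrete to audit later.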

Week 5: Deploy an Agent

This one is simple: work out a way of deploying agents, then deploy one, introducing one automatable or semi-automatable task. Do some due diligence, find the highest-ROI task that can be automated within a week, and build it. Make sure it ends up in production and can be supported. The lessons learned here will prove valuable when going into other teams.
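‘Highest ROI within a week’ can be made a little more explicit with a rough calculation: net hours saved over a year against hours spent building, filtered to tasks that fit the one-week window. This is a crude sketch with hypothetical numbers; it ignores risk, quality, and how much the team actually wants the automation, all of which matter at least as much.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Candidate:
    name: str
    hours_saved_per_week: float
    build_days: float
    upkeep_hours_per_week: float = 0.0


def first_year_roi(c: Candidate, hours_per_day: float = 8, weeks: int = 52) -> float:
    """(Net hours saved in a year minus build hours) divided by build hours."""
    build_hours = c.build_days * hours_per_day
    net_saved = (c.hours_saved_per_week - c.upkeep_hours_per_week) * weeks
    return (net_saved - build_hours) / build_hours


def pick(candidates: list[Candidate], max_build_days: float = 5) -> Optional[Candidate]:
    """Best first-year ROI among candidates that can be built within the window."""
    feasible = [c for c in candidates if c.build_days <= max_build_days]
    return max(feasible, key=first_year_roi, default=None)


candidates = [
    Candidate("triage inbound tickets", hours_saved_per_week=6, build_days=4, upkeep_hours_per_week=1),
    Candidate("draft weekly status report", hours_saved_per_week=2, build_days=1),
    # Scores highest of all, but does not fit in a one-week build.
    Candidate("migrate CRM workflows", hours_saved_per_week=40, build_days=15),
]
for c in candidates:
    print(f"{c.name}: {first_year_roi(c):.1f}")
print("Build first:", pick(candidates).name)  # draft weekly status report
```

The small, boring task wins here because the one-week constraint matters as much as the payoff: the goal of Week 5 is a supported agent in production, not the biggest possible project.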

Week 6: Co-Identify Gaps and Plan Self-Serve Process Improvement

In the final week, it’s important to report back to the rest of the C-suite and give them an idea of what on-the-ground problems are being discovered. Although seen only through the lens of one team, the challenges around governance, security, deployment capabilities, and more will have reared their ugly heads, giving you the grounding to set a strategy and start working through the highest priorities to remove roadblocks to AI adoption and org-wide acceleration.

Role Descriptions

Chief Acceleration Officer

This is not a unique or original idea; it has been proposed as far back as 2000. The most relevant article I could find was written in 2016. The idea has not taken off, and I might be re-proposing something that will never be adopted, but our feedback loops are getting much tighter than ever before and the rate of acceleration is increasing. This is one idea whose time may finally have come.

I think the 2016 article covers the responsibilities well, suggesting the responsibility is to drive change, not people, and to provide change leadership as a catalyst. I hope the above illustrates what that could look like in practice, at least as of March 2026.

Chief Acceleration Engineer

This would be a more technical flavour of the above. They would be responsible for the technical implementation strategy and may report to the CAO. It would be critical that they work with an existing CTO, and they may even report to the CTO instead.

Head of AI Enablement

If organisations would prefer to presuppose that the primary mechanism for acceleration is artificial intelligence, they may prefer a more focused alternative to the wider-ranging titles with ‘Acceleration’ in the name. A Head of AI Enablement would likely report to a CTO or VP of Engineering, with the goal of onboarding and supporting AI across the organisation. This is far more specific than Acceleration, but in practice, in the short term, the differences between what a Head of AI Enablement and a CAO or CAE would do are likely to be very slight.

AI Enablement Engineer

Similar in skillset to the now well-understood Forward Deployed Engineer, or ‘technical consultant’, this role combines deep technical expertise and implementation know-how with strong communication skills and empathy for others. Roles with this title have been posted before on Indeed and LinkedIn, but the responsibilities are not yet fully formed.

I imagine those in this role could be embedded in teams for short stints to co-create solutions to help serve them, leveraging existing tools from platform teams or building wholly new primitives to be leveraged. These may exist on a cross-functional team alongside legal experts, more generalist software engineers, infrastructure experts, and product-minded professionals to deliver end-to-end solutions for other teams as well as build the tools they can self-serve.

The Puck

Organisations need to head for where the puck is going, and in this case, the puck is moving fast. To do this, they need to rethink how organisations work. In the future, there may be a layer that people operate in and a layer that autonomous agents operate in. Like Disney World’s underground utilidor system, which moves supplies and garbage across the park out of sight, agents may move information automatically across teams and solve problems out of sight and out of mind, letting employees work at a higher level of abstraction on more fun and interesting problems.

That kind of change requires a lot of change management, and ‘change management’ as a term already feels reactive. What it requires is acceleration management and AI enablement. To head where the puck is going, organisations need to rethink roles and responsibilities.

FAQ

tbd – ask me questions on LinkedIn so I can fill this section out!